How to interpret the P-value

So you've done a statistical test, got an estimate, a standard error and a P-value. How do you know when there's enough evidence for you to start thinking the effect might really be there? This part of the tutorial will walk you through the main ways to think about this problem.

Example

For example, in our linear regression example we observed a possible association between the

genotypes of a SNP in the gene ATP2B4 and expression of ATP2B4. The fitted coefficients (including a covariate, stage)

were:

Estimate Std. Error Pr(>|t|)

(Intercept) 0.0447 0.0165 0.0131

dosage 0.0392 0.0109 0.0017

stagefetal -0.0477 0.0124 0.0009

So the estimate of the effect of the genotype dosage is 0.0392, and the P-value is 0.0017. Is this enough evidence to conclude that the effect is nonzero?

A sanity check

Before getting started - note that by even asking this question we are making a key assumption - that it's sensible to try to discern 'true' effects from 'zero' effects.

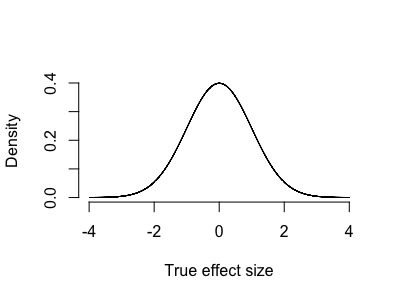

Suppose genetic effects on expression actually follow a continuous distribution - say, a gaussian-like distribution where there are many small effects and a few larger ones:

Here essentially all effects are nonzero. It is pointless to ask whether one of them might be nonzero.

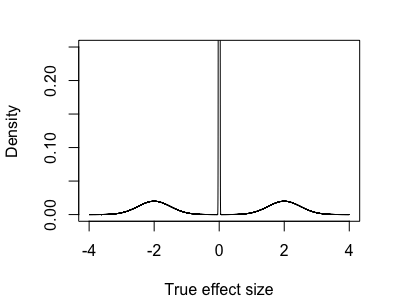

On the other hand, maybe most variants have no effect at all, or very small effects, and a few have large effects - something like:

Here it definitely makes sense to try to call some effects as 'nonzero' - with enough data we ought to be able to distinguish those effects out in the tails.

In many problems - such as looking for genetic variants that affect gene expression - it seems likely though that the real distribution might be a kind of mixture between these two. For example there might be:

lots of variants across the genome that don't affect expression at all

a small number of variants in gene promoter regions that strongly affect expression.

a number of more variants in more distant enhancer regions, that might perhaps have substantial but more modest effects.

and of course expression is affected by many other factors, such as chromatin accessibility and the availability of relevant transcription factors. So in principle there might be a large set of variants in other parts of the genome that also affect expression to a more limited extent.

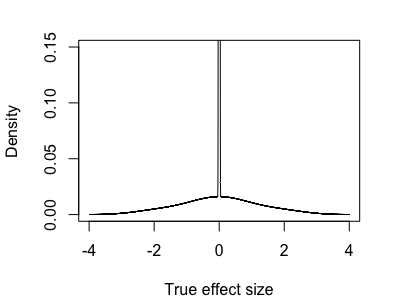

So the true distribution of real effects might look something like:

In this case we are in a kind of half-way house - it sort of makes sense to try to distinguish the zero effects from the nonzero ones, but we have to be aware we'll also be calling many small-but-nonzero effects as zero as we do it.

With this warning aside, for the rest of this page I'll assume we are happy to try to ask whether is nonzero - that is, we are assuming a 'mixture' model where some effects are zero and some aren't. To make this concrete, we'll assume:

that truly nonzero effects come along at some frequency , and

The strength of the truly nonzero effects are distributed according to some distribution .

(For example, the mixtures depicted above had 95% of the mass on the 'spike' near zero and $5% on a diffuse distribution centred at zero.)

With this model, it makes sense to try to find those truly nonzero effects.

How small a p-value is needed to be convinced?

So we've done our study and got a P-value. How small does this need to be before we can be reasonably convinced our effect is nonzero?

A standard way to approach this is to seek a threshold such that if the p-value is smaller than the threshold

then the effect is likely to be genuinely nonzero - that is, we would like

to be large.

The expression above can be computed this using Bayes' rule:

The terms on in the above formula have specific names:

is the statistical power - it's the chance of detecting a truly nonzero effect.

is the probability* that the effect is truly nonzero before we've seen any data. Recall that in our mixture model of effects this was denoted .

If we include these and expand the denominator we get the key formula for p-values:

Key formula (probability version)

Note. That still looks a bit complex. A slightly simpler version can be given by working on the odds scale instad of the probability scale. (Remember that if is a probability, the odds correponding to is ). On the odds scale the formula becomes:

Key formula (odds version)

where

This formula makes clear that choice of a reasonable threshold for the P-value depends on the interplay between the study power and the prior probability (or odds) of the effect.

Given that we are happy to assume the mixture model of effects outlined above (that is, such that the question 'is ' makes sense), the formula tells us how to pick p-value thresholds to have good condfidence that our 'discoveries' represent real associations.

Note

The left hand side of the key formula can be called the "posterior probability (or odds) of association" given .

Another name for it is the positive true discovery rate given . This naming comes about by analogy to the 'positive false discovery rate' which is effectively defined as one minus the quantity above:

Interpreting evidence

The key formula gives us a way to pick a sensible p-value threshold . To apply it you have to know two things:

the power of the test

the prior probability

There are three ways to work these out. Either

- you can make them up.

- you can learn them from other, previously published datasets.

- or you can try to learn them from the dataset itself.

All three of these approaches can be useful.

Making up is easy to do

Example: the WTCCC GWAS paper

The 'key formula' above appears in Box 1 of the original Wellcome Trust Case-Control Consortium paper, which was one of the earliest large genome-wide association studies. It was used to pick a threshold of for following up on putative genetic associations.

Here's how the calculation was presented. The authors supposed there might be, heuristically, around a million 'linkage disequilibrium blocks' in the human genome. (A linkage disequilibrium block is a region of the genome, perhaps bordered by recombination hotspots, over which common genetic variants have become correlated due to ancestral processes such as genetic drift). And they supposed that, perhaps, 10 such regions in the genome might be genuinely associated with a given common disease - giving an approximate prior odds of

If the power were around 50% then this would give

If then

So - if the assumptions are right - should give us a posterior odds of 10, or a posterior probability of about a 90% that the association is truly nonzero.

Now obviously these values are made up - the prior probability estimate could have been wildly out of range, and the true power wasn't known either. Nevertheless, this heuristic calculation, which was based on the core hypothesis about the genetic architecture of common diseases, provided a useful and defensible threshold. In practice it worked well and led to key new findings which were later replicated in other studies.

Estimating false discovery rates

John Storey's 2001 paper introduced the idea that - if we have conducted lots of similar tests - the quantities and the power can be estimated from the data itself. The proposed approach goes something like this. Suppose we have conducted tests (of independent hypotheses) with P-values . To apply our formula, we'd need to know both the power and the true proportion of true positives. However, if is large we might be able to estimate them as follows:

First, tests with large p-values (say larger than ) probably reflect the null model - i.e. those where the true effect is zero. Also, P-values for null tests should be uniformly distributed. So, we could estimate as the excess in small p-values over what we'd predict from the number of large p-values.

So, if there are null p-values greater than , then we'd estimate a total of null p-values in total, and the excess is:

Second, we can't estimate the power , because we don't know which p-values come from truly nonzero s. But we could replace it with , in other words:

Combining with the above estimate of gives

Plugging these in leads to the 'positive false discovery rate' estimate:

Of course the corresponding true discovery rate is one minus this quantity.

Storey's FDR method therefore provides a way to estimate the key quantities in the key formula from the data itself - provided we have lots of data to work with.